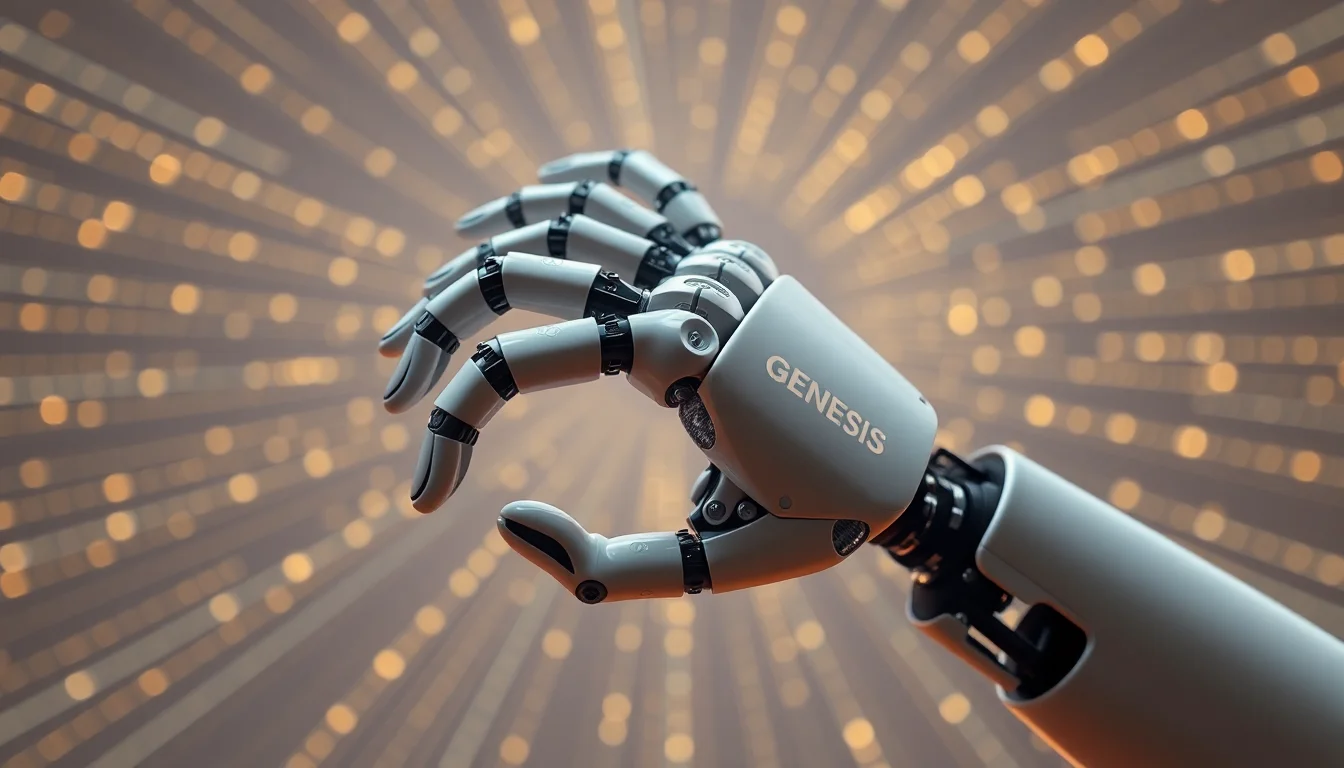

Khosla-backed Genesis AI is making a deliberate break from the software-first posture that has defined much of the AI-for-robotics market. With the debut of GENE-26.5 and an in-house humanoid-sized robotic hand, the company is signaling that it no longer wants to treat hardware as a downstream integration problem. Instead, it is going full-stack: owning the model, the hand, and the simulation system that links the two.

That matters because robotics AI is not just a scaling problem in the way language modeling is. In manipulation, every useful improvement in policy quality has to survive contact with physical friction, latency, sensor noise, actuator limits, and the plain fact that real objects break differently than simulated ones. Genesis is betting that a tighter hardware-and-model co-design loop can shrink that gap faster than a model-only strategy would.

According to the company’s own framing, the model remains the center of gravity. CEO Zhou Xian’s line that “the model has always been the goal” is a useful clue: Genesis is not abandoning the frontier-model logic that dominates modern AI. What changed is the implementation philosophy. After deciding it needed control over the hardware, the company moved to a full-stack approach intended to speed iteration through its simulation system. That is a meaningful shift in robotics engineering because it places data generation, control policy refinement, and mechanical design inside the same feedback cycle rather than across separate vendor boundaries.

The newly shown hand is the other half of the story. Genesis says its in-house hardware is humanoid-sized rather than built around the two-finger grippers that many robotics companies have leaned on. That choice is not cosmetic. A hand that matches human dimensions is a stronger testbed for manipulation tasks that resemble real work: grasping everyday objects, handling tools, and operating in environments designed for people. It may also reduce one of the more stubborn sources of sim-to-real error: the more closely the hand's geometry, range of motion, and contact surfaces resemble a human hand's, the better the chance that training and evaluation environments built around human-scale tasks reflect deployment reality.

This is where hardware-and-model co-design becomes more than a buzzword. In a unified stack, the team can revise a mechanical component, regenerate simulation data, retrain or fine-tune the model, and immediately test whether the new configuration improves grasp success or task completion. In principle, that shortens the cycle between hypothesis and evidence. In practice, it also creates a more demanding engineering discipline. Every change has to be validated not just for benchmark performance, but for reproducibility, safety, thermal behavior, wear, calibration drift, and whether the simulated gains actually persist across multiple physical units.
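The iteration cycle described above can be framed as an acceptance test for each design change. What follows is a minimal, hypothetical Python sketch, not Genesis's actual pipeline: `HandDesign`, `codesign_step`, the toy simulator, and the per-unit "transfer" factors are all illustrative assumptions. Each evaluator stands in for the whole "regenerate simulation data, retrain, measure task success" step, and a change is kept only if its simulated gain also shows up on every physical unit.

```python
from dataclasses import dataclass, replace
from typing import Callable, Sequence


@dataclass(frozen=True)
class HandDesign:
    """Hypothetical mechanical parameter; illustrative only."""
    pad_friction: float


# An evaluator maps a design to a task-success rate in [0, 1]. In a real stack
# this would hide data regeneration, policy retraining, and repeated trials.
Evaluator = Callable[[HandDesign], float]


@dataclass
class StepResult:
    accepted: bool
    sim_gain: float
    real_gains: list[float]


def codesign_step(
    current: HandDesign,
    candidate: HandDesign,
    sim_eval: Evaluator,
    unit_evals: Sequence[Evaluator],
    min_gain: float = 0.02,
) -> StepResult:
    """Accept a candidate only if its simulated gain persists on every physical unit."""
    sim_gain = sim_eval(candidate) - sim_eval(current)
    real_gains = [ev(candidate) - ev(current) for ev in unit_evals]
    accepted = sim_gain >= min_gain and all(g >= min_gain for g in real_gains)
    return StepResult(accepted, sim_gain, real_gains)


def toy_sim(design: HandDesign) -> float:
    # Toy simulator (assumption): success rises with pad friction, capped at 0.95.
    return min(0.95, 0.4 + 0.5 * design.pad_friction)


def make_toy_unit(transfer: float) -> Evaluator:
    # Toy physical unit (assumption): only a fraction `transfer` of the simulated
    # friction benefit survives wear, calibration drift, and contact effects.
    def evaluate(design: HandDesign) -> float:
        return min(0.95, 0.4 + 0.5 * transfer * design.pad_friction)
    return evaluate


if __name__ == "__main__":
    baseline = HandDesign(pad_friction=0.6)
    candidate = replace(baseline, pad_friction=0.8)
    # The second unit retains almost none of the simulated gain.
    units = [make_toy_unit(0.9), make_toy_unit(0.1)]
    result = codesign_step(baseline, candidate, toy_sim, units)
    print(result.accepted)  # False: the gain does not persist on unit 2
```

Requiring the gain on every unit, rather than on the average across units, is one way to encode the point that simulated improvements must persist across multiple physical units; a fuller gate would also check safety, thermal behavior, and wear before accepting a change.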

Genesis’s approach also changes its competitive posture. The company sits in a crowded zone that includes other well-funded players at the AI-robotics boundary such as Physical Intelligence and Skild AI. But unlike software-first teams that may optimize for platform intelligence and rely on third-party hardware, Genesis is arguing that depth comes from ownership of the hand itself. That is a credible strategic claim, especially in manipulation, where the interface between intelligence and actuation is often the product. If the hand is the bottleneck, controlling the hand can become a form of control over the roadmap.

The downside is equally clear. Hardware control is a moat only if the company can afford the operational complexity that comes with it. Building a humanoid-sized robotic hand is not the same as shipping a demo unit. It pulls in manufacturing tolerances, supply-chain resilience, part substitution, field serviceability, and certification questions that software companies can postpone. It also raises the capital intensity of each iteration. A company can improve a model faster than it can redesign a finger joint, and a simulation loop only helps if its assumptions stay close enough to physical behavior to be useful.

Genesis appears aware of that tradeoff. The demo video reportedly shows advanced tasks being performed by the in-house hands, which is the right kind of signal to show at this stage: not a broad product promise, but a concrete link between the model and manipulation performance. Still, a demo is not the same thing as a deployment pipeline. The company has not yet disclosed go-to-market plans, rollout cadence, or the manufacturing and testing partnerships that would indicate how it plans to turn a research stack into a durable platform.

The next 12 to 18 months should reveal whether Genesis can turn its simulation-led iteration into a genuine advantage. The most useful signals to watch are not just larger demos, but whether the company can show:

- steady improvement in real-world task performance, not just in simulation;

- faster iteration cycles from hardware change to model update to validated behavior;

- evidence that the humanoid-sized hand performs reliably across repeated trials and varied objects;

- clear safety and reproducibility practices as the system moves beyond controlled demos;

- and eventual manufacturing or testing partnerships that indicate the stack is ready to scale.

If Genesis can sustain that loop, the full-stack strategy could matter in a way that pure model releases often do not. Robotics is ultimately an integration game, and integration is where control over hardware, data, and simulation can compound. But the same integration also exposes every weak point at once. That is the bet Genesis is making with GENE-26.5: that owning more of the stack will compress the path from model breakthrough to usable manipulation faster than a narrower approach ever could.