Lede: Orbiting into production — what changed and why now

Kepler Communications has moved orbital compute from pilots toward production-grade capability by deploying 40 GPUs in Earth orbit. Sophia Space is the latest customer, a sign of concrete use cases beyond demonstrations. TechCrunch AI's coverage on 2026-04-13 described the deployment as the largest orbital compute cluster currently in operation and noted that the system is open for business.

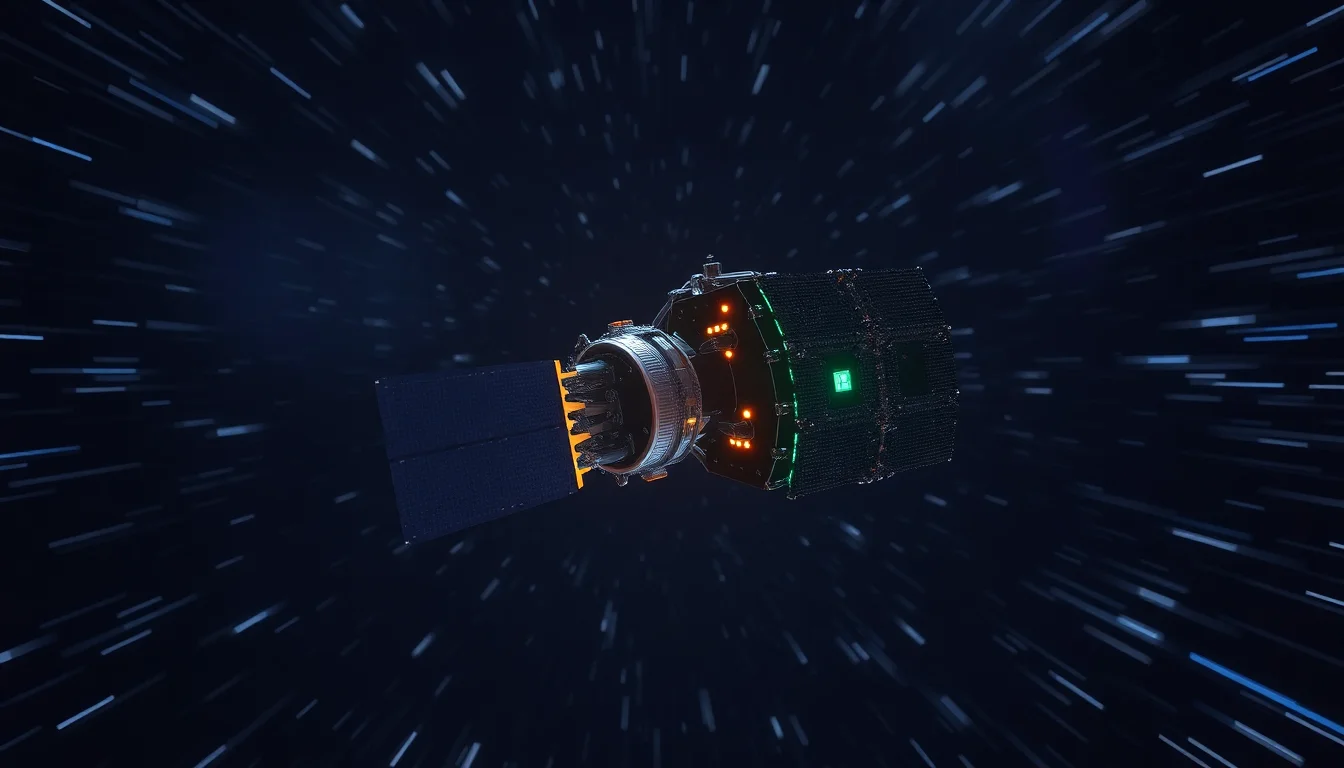

Technical architecture and capabilities

The cluster operates in Earth orbit with 40 GPUs in a single payload. The arrangement points to a new class of edge and in-space compute, where radiation, power budgets, and the realities of uplink and downlink bandwidth shape operational design. The ground segment remains essential for orchestration, software updates, and data routing, but the core compute now resides in space.

Implications for AI product rollouts and tooling

Orbit-native workloads could decouple the turnaround time of certain AI workloads from terrestrial networks, which would demand orbit-aware software pipelines, security models, and performance benchmarks. The deployment invites a rethinking of CI/CD, model update cadence, and data governance for cases where the compute fabric itself is in orbit.
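To make the point about model update cadence concrete, here is a minimal back-of-the-envelope sketch of how link capacity could bound how often new model weights reach orbit. Everything in it is an assumption for illustration: the function name `passes_needed`, the link rate, the pass duration, and the efficiency factor are hypothetical and are not figures reported for Kepler's system.

```python
import math


def passes_needed(update_bytes: int, link_bps: float,
                  pass_seconds: float, efficiency: float = 0.8) -> int:
    """Estimate how many ground-station passes an uplink of `update_bytes` takes.

    All inputs are hypothetical planning numbers, not measured values:
      link_bps      raw uplink rate in bits per second
      pass_seconds  usable contact time per pass
      efficiency    fraction of the raw rate left after protocol overhead
    """
    if update_bytes < 0:
        raise ValueError("update_bytes must be non-negative")
    if link_bps <= 0 or pass_seconds <= 0:
        raise ValueError("link_bps and pass_seconds must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")

    # Bytes that fit into a single contact window.
    bytes_per_pass = link_bps * efficiency * pass_seconds / 8
    return math.ceil(update_bytes / bytes_per_pass)


if __name__ == "__main__":
    # Illustrative only: a 14 GB weight file over an assumed 100 Mbit/s
    # uplink with an assumed 8-minute contact window per pass.
    print(passes_needed(14_000_000_000, 100e6, 480))
```

Under these assumed numbers a full-weight update spans several passes, which is the kind of constraint that would push a pipeline toward smaller deltas or less frequent releases than a terrestrial deployment would use.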

Market positioning and competitive landscape

With Sophia Space as a customer, Kepler positions itself as the reference provider for space-based AI services. This milestone could influence how providers price orbital services, what SLAs look like, and how data-handling norms develop for in-space compute.

Risks, costs, and open questions

Power budgets, radiation tolerance, lifecycle maintenance, insurance, and regulatory clarity remain open questions that will determine whether orbital AI becomes a scalable model or stays a niche. The field will need clear risk models and operational standards as more operators enter the market.