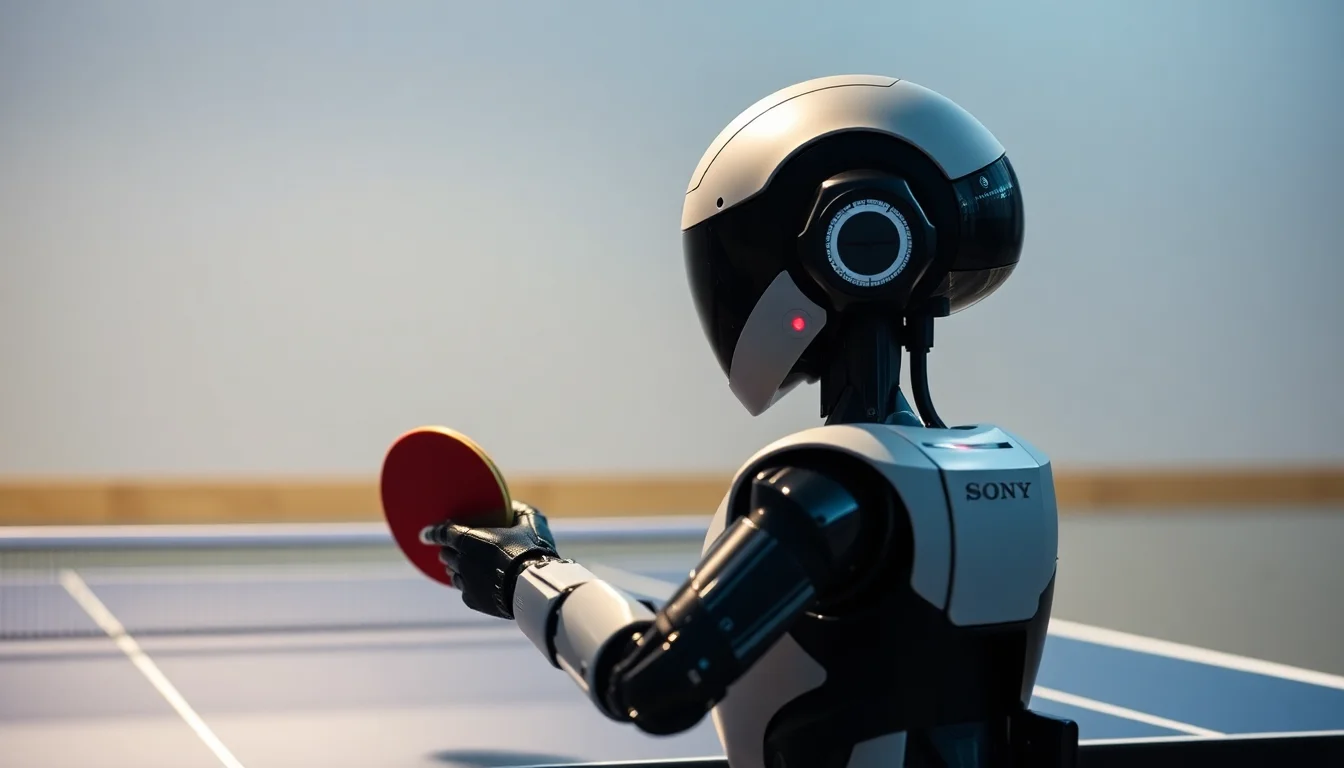

Sony AI’s Ace has done something the robotics field has been chasing for years: it beat elite human table-tennis players under ITTF rules in the physical world, not in simulation or a scripted demo. That matters because table tennis compresses almost every hard problem in embodied AI into a few milliseconds at a time. The ball arrives fast, spins unpredictably, and leaves very little room for correction once the robot commits to a swing.

In that sense, Ace is not just a sports robot. It is a benchmark for real-time physical AI. The result, described by Sony AI and framed in Nature as a major breakthrough, shows that a machine can close the perception-to-action loop quickly enough to compete in a domain where reaction time, continuous estimation, and precise actuation all have to line up.

The important technical detail is the pace. Table tennis forces perception, planning, and control to operate on millisecond budgets. The system has to detect the ball, infer its trajectory and spin, decide on a return, and execute the motion with enough fidelity that the paddle arrives where the planner intended. Any slippage in the chain, whether sensor delay, model latency, controller jitter, actuator lag, or a bad estimate of the opponent’s shot, can turn into a missed return.
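To make the idea of a millisecond budget concrete, here is a minimal sketch of the arithmetic. The only hard fact in it is the 2.74 m length of a regulation table. The stage names, per-stage latencies, and ball speeds are illustrative assumptions, not measurements of Ace or anything Sony AI has published.

```python
from dataclasses import dataclass

TABLE_LENGTH_M = 2.74  # regulation table length in metres


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float


def flight_time_ms(distance_m: float, speed_m_s: float) -> float:
    """Time for the ball to cover distance_m at a constant speed (ignores drag and spin)."""
    return 1000.0 * distance_m / speed_m_s


def slack_ms(stages: list[Stage], budget_ms: float) -> float:
    """Budget left after every stage has run; a negative value means a missed return."""
    return budget_ms - sum(stage.latency_ms for stage in stages)


# Hypothetical per-stage latencies, chosen only to illustrate the bookkeeping.
STAGES = [
    Stage("sensor capture", 5.0),
    Stage("ball detection", 8.0),
    Stage("trajectory and spin estimate", 10.0),
    Stage("return planning", 15.0),
    Stage("swing execution", 150.0),
]

if __name__ == "__main__":
    for speed in (10.0, 20.0):  # assumed ball speeds in m/s
        budget = flight_time_ms(TABLE_LENGTH_M, speed)
        print(f"{speed:.0f} m/s: budget {budget:.0f} ms, slack {slack_ms(STAGES, budget):.0f} ms")
```

Under these assumed numbers the chain fits comfortably at 10 m/s and runs out of time at 20 m/s, which is the point of the paragraph above: once the budget is fixed by the ball, every stage competes for the same few milliseconds.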

Ace reportedly combines a high-speed perception system with rapid decision loops and precise physical control. That combination is the core of the advance. The field has long had strong results in digital environments, where compute is abundant and state is clean. Physical AI is different: the world does not pause while the model reasons. If the robot cannot maintain a tight perception–planning–control loop, the model’s intelligence never reaches the actuator in time to matter.

That distinction is why Ace feels like a production-relevant milestone rather than just a headline. In robotics, “works in the lab” is not enough. Systems that operate in dynamic environments need disciplined latency budgets, control stacks that are deterministic enough to meet them, and enough reliability that performance does not degrade over repeated execution under pressure. In practical terms, that implies a hardware-software stack built around fast sensors, low-latency inference, tightly integrated controllers, and data pipelines that can support both training and validation without introducing timing variance.

This is also where the tooling implications become concrete. The next wave of robotics platforms will be judged less by isolated model accuracy and more by end-to-end throughput: how fast sensor data is ingested, how quickly state is estimated, how stably the planner behaves under changing inputs, and how reliably commands are translated into movement. For physical AI, the benchmark is not just whether the model predicts well. It is whether the full stack can turn those predictions into action repeatedly, within a hard real-time envelope, under noisy and adversarial conditions.
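One way to express that kind of end-to-end judgment is to look at the tail of the loop-latency distribution rather than the average. The sketch below is a generic illustration, not a description of Sony AI's tooling. The function names, the sample values, and the 10 ms deadline are all hypothetical.

```python
import math


def percentile(samples: list[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies_ms: list[float], deadline_ms: float) -> dict[str, float]:
    """Summarize end-to-end loop latencies against a hard deadline."""
    misses = sum(1 for value in latencies_ms if value > deadline_ms)
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p99_ms": percentile(latencies_ms, 99),
        "worst_ms": max(latencies_ms),
        "miss_rate": misses / len(latencies_ms),
    }


if __name__ == "__main__":
    # Made-up samples: a loop that is usually fast but has a rare slow iteration.
    samples = [4.0] * 98 + [6.0, 20.0]
    print(summarize(samples, deadline_ms=10.0))
```

A median of a few milliseconds can hide a single iteration that blows through the deadline, so the worst case and the miss rate are the figures that matter when the envelope is hard real-time.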

Ace suggests that the industry is moving toward a new reference architecture for autonomous robotics: high-rate perception, fast closed-loop planning, safety-aware control, and tightly managed data flow between them. That has implications for sensor vendors, controller designers, and the platforms that collect, label, and replay robot interaction data. It also shifts the conversation from model capability in the abstract to system integration in the field, where timing, calibration, and fault tolerance matter as much as raw intelligence.

Sony AI now has a recognizable platform benchmark. Even if table tennis remains a specialized domain, the engineering lesson is broad enough to matter to robotics teams building for warehouses, labs, factories, and other structured environments. If a robot can hold up under professional rules in a high-speed sport, then the surrounding stack of sensing, compute, motion control, and runtime orchestration has reached a level of maturity that competing robotics groups will want to study closely.

That does not mean the result generalizes cleanly to every task. It does not. Table tennis is a demanding but constrained environment, and there is still a large gap between a robot that can dominate a rally and one that can safely and reliably operate across diverse, unstructured settings. Generalization, long-horizon autonomy, and deployment outside controlled conditions remain open problems.

There are also the practical questions that follow every leap in embodied AI: how robust is the system over time, what happens when sensors degrade, how are safety boundaries enforced, and what kind of regulatory scrutiny comes with fast autonomous machines operating near people? Those concerns will matter more, not less, as the field pushes from competitive demonstrations toward products.

Even so, Ace marks a clear change in the narrative. The line between digital AI success and physical-world deployment is getting thinner. A robot that can win under ITTF rules is not a universal solution, but it is strong evidence that millisecond-scale perception-to-action is no longer a theoretical target. For robotics builders, that turns latency, reliability, and control integration into first-order product requirements rather than afterthoughts.