Festo’s new GripperAI is aimed at one of industrial automation’s most stubborn problems: how to handle changing product mixes without turning every cell into a custom engineering project. The company is pitching the software as a universal AI layer for mixed-product handling, with the practical promise that robots can adapt to variable items without bespoke programming, SKU-specific templates, or repeated hand tuning.

What makes that notable is not just the use of AI, but where the intelligence sits. GripperAI runs locally on a standard industrial PC paired with a 3D camera, rather than relying on a remote service or a cloud-mediated workflow. That edge-first design matters because mixed-product cells are often judged on latency, determinism, and integration burden as much as on raw accuracy. By keeping perception and decision-making on-site, Festo is framing GripperAI as an automation tool designed for production environments, not as an experimental vision demo.

Edge-first disruption: what changes now

For factories handling heterogeneous items in the same machine, the historical pattern has been familiar: repeated programming, application-specific integration, and expensive 3D camera setups for each use case. Those requirements create friction before a cell ever reaches steady operation, and they make reconfiguration slow when product lines change.

GripperAI attacks that setup cost directly. Instead of loading templates for each SKU, the system is designed to adapt automatically to mixed products. The immediate shift is architectural as much as operational: the gripper logic becomes a software capability layered onto standard industrial hardware, rather than a bespoke control package built around a fixed catalog of parts.

That is a meaningful change for technical teams because it moves mixed-product handling closer to a reusable deployment pattern. The value is not just in picking items; it is in reducing the amount of application-specific coding that traditionally sits between a robot arm and a workable cell.

How the system works

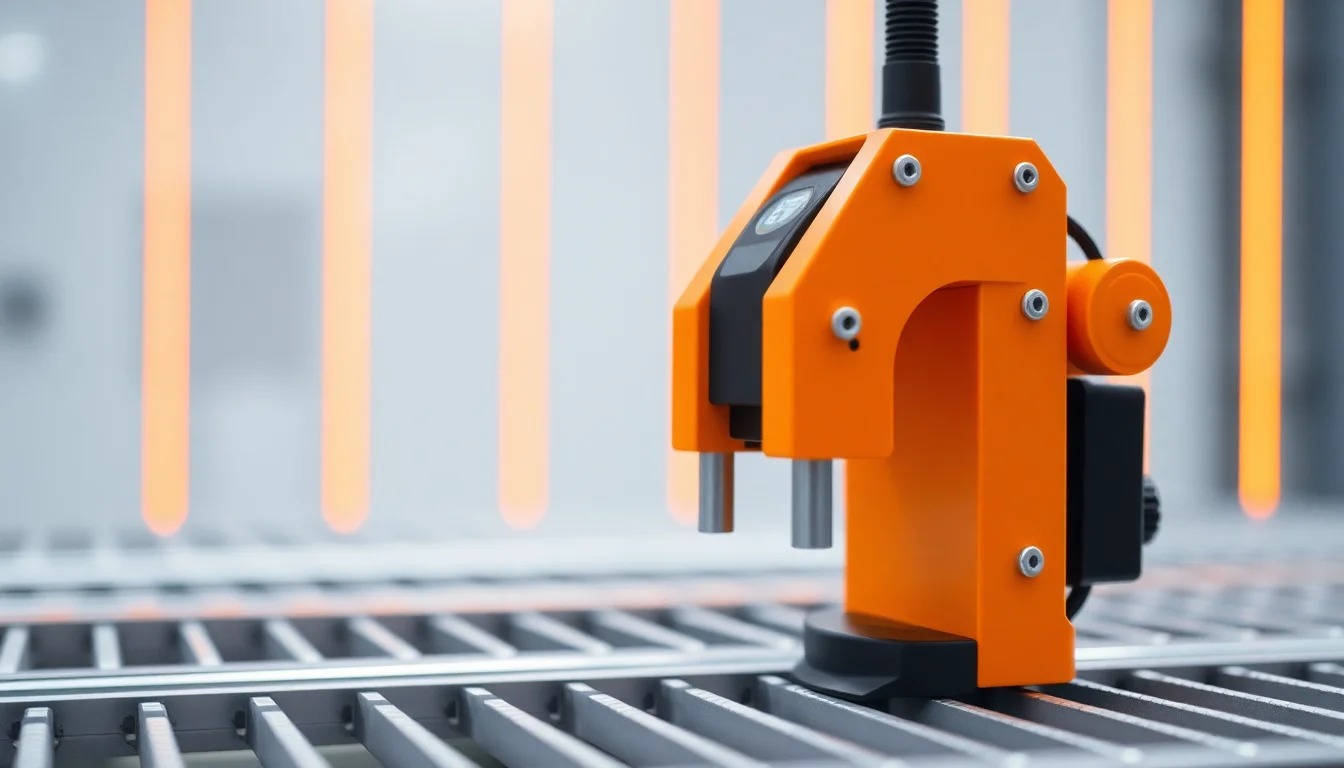

At the system level, GripperAI combines a 3D camera, a local industrial PC, and the robot’s control stack. The AI software processes the scene at the edge, where it can analyze the geometry of each item without sending data off-site for inference.

For each detected object, the software automatically computes gripping points. If multiple tools are available, it also selects a suitable tool before handing execution off to the robot, whose motion control then carries out the move.
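To make the per-object step more concrete, here is a minimal sketch of the *kind* of logic described above: derive a grip point from an object's 3D points, then choose the smallest available tool that can handle it. Festo has not published GripperAI's algorithm. The centroid-plus-top-surface heuristic, the `Tool` and `GripPlan` types, and the width limits are all hypothetical stand-ins, and the points are assumed to be already expressed in a frame with z pointing up.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# (x, y, z) in metres; assumes a frame with z pointing up from the bin or conveyor floor.
Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Tool:
    name: str
    max_width_m: float  # hypothetical: widest object footprint this tool can handle


@dataclass(frozen=True)
class GripPlan:
    grip_point: Point
    tool: Tool


def compute_grip_point(points: List[Point]) -> Point:
    """Hypothetical heuristic: grasp above the x/y centroid, at the highest surface."""
    n = len(points)
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    top_z = max(p[2] for p in points)
    return (cx, cy, top_z)


def footprint_width(points: List[Point]) -> float:
    """Largest extent of the object along x or y."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return max(max(xs) - min(xs), max(ys) - min(ys))


def select_tool(points: List[Point], tools: List[Tool]) -> Optional[Tool]:
    """Choose the smallest available tool whose width limit covers the object."""
    width = footprint_width(points)
    candidates = [t for t in tools if t.max_width_m >= width]
    return min(candidates, key=lambda t: t.max_width_m) if candidates else None


def plan_pick(points: List[Point], tools: List[Tool]) -> Optional[GripPlan]:
    """Per-object step: a grip point plus a tool, or None if the object cannot be handled."""
    if not points:
        return None
    tool = select_tool(points, tools)
    if tool is None:
        return None
    return GripPlan(grip_point=compute_grip_point(points), tool=tool)
```

With `Tool("small_suction", 0.05)` and `Tool("wide_gripper", 0.20)` available, a 4 cm-wide object is assigned the suction tool, while anything wider than 20 cm returns `None` and would have to be routed elsewhere. A production system would reason about surface normals, collisions, and grasp stability rather than a simple centroid.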

That division of labor is important. GripperAI is not replacing the robot controller; it is inserting an AI decision layer upstream of it. Perception, grip planning, and tool selection happen in software, while path control and motion execution remain with the robot's own execution chain. In other words, the system automates the most variable part of the job, deciding how to grasp a specific item, without disturbing the deterministic machinery that moves the arm.
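One way to picture that boundary is as a narrow interface: the decision layer emits a target and a tool, and the controller alone decides how to move there. The sketch below assumes that split; `PickRequest`, `RobotController.execute_pick`, and the workspace check are hypothetical names chosen to illustrate the idea, not a Festo or robot-vendor API.

```python
from dataclasses import dataclass, field
from typing import List, Protocol, Tuple

Point = Tuple[float, float, float]  # metres, robot base frame


@dataclass(frozen=True)
class PickRequest:
    """What the AI layer hands downstream: a target and a tool, not a trajectory."""
    target: Point
    tool_name: str


class RobotController(Protocol):
    """Hypothetical interface; the real controller keeps path control and motion."""

    def execute_pick(self, request: PickRequest) -> bool: ...


@dataclass(frozen=True)
class Workspace:
    """Axis-aligned box the cell has been validated for."""
    lower: Point
    upper: Point

    def contains(self, p: Point) -> bool:
        return all(lo <= v <= hi for lo, v, hi in zip(self.lower, p, self.upper))


def hand_off(request: PickRequest, workspace: Workspace, controller: RobotController) -> bool:
    """Forward a pick only if its target lies inside the validated workspace."""
    if not workspace.contains(request.target):
        return False  # rejected in the decision layer; the controller never sees it
    return controller.execute_pick(request)


@dataclass
class RecordingController:
    """Stand-in for a real controller, used here only to show the boundary."""
    received: List[PickRequest] = field(default_factory=list)

    def execute_pick(self, request: PickRequest) -> bool:
        self.received.append(request)
        return True
```

Limiting the handoff to a target and a tool name, rather than a full trajectory, is what would let the controller's deterministic motion stack stay unchanged while the AI layer evolves.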

Because the software runs locally, deployment can be treated as a cell-level integration problem rather than a networked AI rollout. That may sound like a modest distinction, but in industrial environments it often determines whether a system is considered manageable by operations teams.

Deployment workflow and integration friction

Festo’s description of deployment is intentionally conventional. Integrating GripperAI involves mounting and aligning the camera, verifying that lighting is usable, calibrating the robot base to the camera’s frame, and configuring the software’s pick parameters.
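Calibrating the robot base to the camera's frame yields a rigid transform that maps every point the camera sees into coordinates the robot can move to. The sketch below shows only how such a transform is *applied*, assuming a rotation `R` and translation `t` have already been estimated (typically by a hand-eye calibration routine). The function names and the example camera pose are hypothetical, not part of GripperAI.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Matrix = List[List[float]]


def make_transform(rotation: Sequence[Sequence[float]], translation: Vec3) -> Matrix:
    """Build the 4x4 homogeneous transform T_base_camera from a 3x3 rotation R and translation t."""
    rows = [[float(v) for v in rotation[i]] + [float(translation[i])] for i in range(3)]
    return rows + [[0.0, 0.0, 0.0, 1.0]]


def camera_to_base(T: Matrix, p_cam: Vec3) -> Vec3:
    """Map a point from the camera frame into robot base coordinates: p_base = R p_cam + t."""
    x, y, z = p_cam
    bx, by, bz = (T[i][0] * x + T[i][1] * y + T[i][2] * z + T[i][3] for i in range(3))
    return (bx, by, bz)
```

For example, a camera mounted 1 m above the base and 0.4 m along its x axis, looking straight down, has `R = [[1, 0, 0], [0, -1, 0], [0, 0, -1]]` and `t = (0.4, 0.0, 1.0)`. A point the camera measures at `(0.1, 0.05, 0.8)` then lands at roughly `(0.5, -0.05, 0.2)` in the robot's frame. Any error in `R` or `t` shifts every grip point by the same amount, which is why alignment and calibration remain nontrivial.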

That sequence will be familiar to anyone who has integrated vision into a robot cell. The difference is that the setup appears to stop short of item-by-item programming, which is precisely where mixed-product cells have historically accumulated repeated programming and expensive setups whenever a single machine had to handle a range of variable products.

The practical implication is that the front-end work shifts from writing and maintaining SKU logic to getting the sensing stack physically and geometrically right. Camera alignment, illumination, and calibration remain nontrivial. But once those conditions are satisfied, the system is intended to generalize across products rather than requiring a new template every time the mix changes.

That should matter to deployment teams because it compresses the amount of specialized engineering attached to each new application. Even if integration still requires careful calibration, the software architecture lowers the amount of bespoke logic that typically stretches project timelines.

Market and competitive implications

GripperAI also signals a broader shift in how value may be distributed across the automation stack. If a universal AI-based software layer can handle a range of items without custom programming, then some of the differentiation that once sat in application-specific integration starts moving into software and deployment know-how.

That does not eliminate the role of integrators or hardware suppliers. It changes where their effort is concentrated. Instead of building one-off SKU trees and maintaining tightly scoped program logic, they may spend more time on camera setup, calibration discipline, and validation of edge inference in real production conditions. The software layer becomes more strategic because it absorbs variability that used to be handled manually.

For end users, the appeal is obvious: a system that can be adapted to mixed-product workflows without turning every change into a reengineering task. For vendors, the risk is equally clear. When cells become easier to configure, the premium associated with custom application work may narrow. In that sense, GripperAI is not just a product release; it is a sign that mixed-product robotics is moving toward a more standardized software-defined model.

Risks, questions, and the path forward

The promise of edge-based AI in production still comes with practical constraints. GripperAI depends on the same fundamentals that constrain many vision systems: usable lighting, clean optics, and stable camera calibration. Those are not incidental details. They are the conditions that determine whether the perception layer stays reliable after deployment.
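One common way to keep "stable camera calibration" from being a silent assumption is to re-measure fixed reference markers in the cell and compare them with the positions recorded at commissioning. The following is a minimal sketch under that assumption: marker positions are taken to be expressed in the robot base frame via the current calibration, and the marker names and the 2 mm tolerance are placeholders, not Festo recommendations.

```python
import math
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def drifted_markers(
    measured: Dict[str, Vec3],
    reference: Dict[str, Vec3],
    tolerance_m: float = 0.002,  # placeholder tolerance, not a vendor figure
) -> List[str]:
    """Return names of reference markers whose measured position moved beyond tolerance.

    A marker missing from `measured` (for example occluded, or lost to poor lighting)
    is reported as well, since it can no longer confirm the calibration.
    """
    failures = []
    for name, ref_pos in reference.items():
        pos = measured.get(name)
        if pos is None or math.dist(pos, ref_pos) > tolerance_m:
            failures.append(name)
    return sorted(failures)
```

Treating a missing marker as a failure is deliberate in this sketch: dirty optics or changed lighting often show up first as markers that can no longer be found, not as markers that appear in the wrong place.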

There are also operational questions that any factory deploying AI-controlled grasping will need to confront. Model updates have to be managed carefully. Perception performance needs ongoing monitoring. And because the AI’s behavior is now part of the control path, organizations will need clear ownership for auditing, maintenance, and safety validation.
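Ongoing perception monitoring can start as simply as tracking pick outcomes over a rolling window and flagging the cell when the success rate drops. The sketch below assumes each pick attempt can be labelled as success or failure; the window size and alert threshold are placeholders to be set per cell, and the `reset` hook is one hypothetical way to keep results from before and after a model update from being mixed.

```python
from collections import deque
from typing import Deque, Optional


class PickSuccessMonitor:
    """Rolling pick-success rate over the most recent `window` attempts."""

    def __init__(self, window: int = 200, alert_below: float = 0.95) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self._results: Deque[bool] = deque(maxlen=window)
        self.alert_below = alert_below  # placeholder threshold; set per cell

    def record(self, success: bool) -> None:
        self._results.append(success)  # the oldest result drops out once the window is full

    def success_rate(self) -> Optional[float]:
        if not self._results:
            return None
        return sum(self._results) / len(self._results)

    def needs_attention(self) -> bool:
        """True once a full window's success rate falls below the threshold."""
        rate = self.success_rate()
        window_full = len(self._results) == self._results.maxlen
        return rate is not None and window_full and rate < self.alert_below

    def reset(self) -> None:
        """Clear history, e.g. after a model update, so old and new behavior are not mixed."""
        self._results.clear()
```

Waiting for a full window before alerting trades a little detection delay for fewer false alarms from a handful of early misses.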

That is where the narrative tension around products like GripperAI sits. The software is pitched as a way to remove bespoke programming and SKU templates from mixed-product handling, and the technical design Festo describes is consistent with that claim. But the final test is not whether the system can identify a part in a demo cell. It is whether teams can keep the edge stack stable, calibrated, and explainable as products, lighting conditions, and production demands change.

If Festo’s approach holds up in real deployments, it points to a more general pattern in industrial AI: the most valuable systems may be the ones that make robotics less like software integration work and more like infrastructure—configured once, monitored continuously, and expected to adapt without rewrites.